The emergence of AI-powered text-to-image services has revolutionized content creation, but it has also made deepfakes harder to detect. AI-generated images can be so realistic that distinguishing them from authentic visuals is increasingly difficult. This article explores ten techniques for detecting deepfakes created by text-to-image services, providing a practical framework for understanding and addressing this growing challenge.

1. Reverse Image Search

Reverse image search helps establish whether an image has been manipulated or generated by AI. By uploading the image to platforms like TinEye or Google Images, users can trace its origins and check for matches across the internet. A match with an earlier version can reveal that the image was altered, while a realistic image with no earlier source anywhere online may have been generated from scratch, although a missing match alone is not proof.

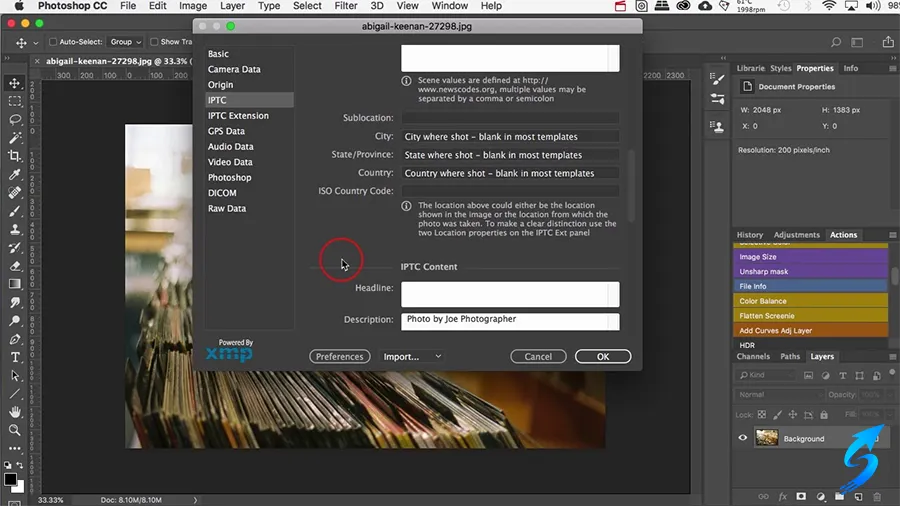

2. Metadata

Metadata refers to the information embedded in an image file, such as the camera model, capture date, and location. Examining metadata can provide clues about whether the image is authentic. AI-generated images often lack detailed camera metadata or include anomalies that suggest tampering, although many platforms strip metadata on upload, so its absence alone is not conclusive. Tools like ExifTool can extract and analyze metadata to detect inconsistencies.
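As a rough illustration of the first check, the sketch below tests whether a JPEG file carries an Exif block at all. It uses only the Python standard library and walks the JPEG segment headers by hand. The function name `jpeg_has_exif` and the two tiny hand-built byte strings are assumptions made for this example, and it is no substitute for a full metadata dump from a tool like ExifTool.

```python
import struct

def jpeg_has_exif(data: bytes) -> bool:
    """Return True if the JPEG bytes contain an APP1 segment that starts with an Exif header."""
    if data[:2] != b"\xff\xd8":              # every JPEG starts with the SOI marker
        raise ValueError("not a JPEG stream")
    pos = 2
    while pos + 4 <= len(data):
        if data[pos] != 0xFF:                # lost sync with the segment structure
            break
        marker = data[pos + 1]
        if marker in (0xD9, 0xDA):           # EOI or start of scan: metadata segments are over
            break
        (length,) = struct.unpack(">H", data[pos + 2:pos + 4])  # length includes its own 2 bytes
        payload = data[pos + 4:pos + 2 + length]
        if marker == 0xE1 and payload.startswith(b"Exif\x00\x00"):
            return True
        pos += 2 + length                    # jump to the next segment
    return False

# Hand-built minimal streams for demonstration only (not valid viewable images).
exif_payload = b"Exif\x00\x00II"
with_exif = b"\xff\xd8\xff\xe1" + struct.pack(">H", 2 + len(exif_payload)) + exif_payload + b"\xff\xd9"
jfif_payload = b"JFIF\x00"
without_exif = b"\xff\xd8\xff\xe0" + struct.pack(">H", 2 + len(jfif_payload)) + jfif_payload + b"\xff\xd9"

print(jpeg_has_exif(with_exif), jpeg_has_exif(without_exif))
```

For a real file, the bytes would come from `open(path, "rb").read()`. A `False` result only means the file has no Exif block, which is a prompt for closer inspection and not a verdict.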

3. Inconsistent Perspective

AI-generated images sometimes exhibit flaws in perspective. Objects in the background might not align properly with those in the foreground, or the proportions might seem unrealistic. Observing these details can help identify deepfakes. Perspective issues are particularly common in complex scenes involving multiple elements.

4. Artifacts in the Fourier Domain

Fourier-domain analysis examines the frequency components of an image. AI-generated images may contain characteristic artifacts, such as periodic patterns or unusual high-frequency energy, that are not present in real photographs. Tools that analyze the frequency spectrum can detect these artifacts, making it easier to spot deepfakes. This method can be effective for images created using diffusion models, though the artifacts tend to weaken after resizing or heavy compression.
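To make the idea concrete, here is a minimal sketch of one possible frequency statistic: the share of an image patch's spectral energy that lies above a chosen radial frequency. It uses a naive pure-Python DFT, so it is only practical for small patches. The function names, the `cutoff` value of 0.25 cycles per pixel, and the synthetic test patches are all assumptions for illustration. A real detector would need a fast FFT, full-size images, and thresholds calibrated on known real and generated samples.

```python
import cmath
import math

def power_spectrum(img):
    """Naive 2D DFT power spectrum of a small square grayscale patch (list of equal-length rows)."""
    n = len(img)
    # Twiddle factors exp(-2*pi*i*k/n), reused for both passes.
    w = [cmath.exp(-2j * math.pi * k / n) for k in range(n)]
    # The 2D DFT is separable: transform each row first, then each column.
    rows = [[sum(row[x] * w[(u * x) % n] for x in range(n)) for u in range(n)]
            for row in img]
    return [[abs(sum(rows[y][u] * w[(v * y) % n] for y in range(n))) ** 2
             for u in range(n)] for v in range(n)]

def high_freq_ratio(img, cutoff=0.25):
    """Fraction of non-DC spectral energy whose radial frequency exceeds `cutoff` (cycles/pixel)."""
    n = len(img)
    spec = power_spectrum(img)
    high = total = 0.0
    for v in range(n):
        for u in range(n):
            if u == 0 and v == 0:
                continue                      # ignore the DC term (mean brightness)
            fu = min(u, n - u) / n            # fold indices into the range 0..0.5
            fv = min(v, n - v) / n
            total += spec[v][u]
            if math.hypot(fu, fv) > cutoff:
                high += spec[v][u]
    return high / total if total else 0.0

n = 16
# A smooth patch: one slow horizontal cosine wave.
smooth = [[128 + 100 * math.cos(2 * math.pi * x / n) for x in range(n)] for _ in range(n)]
# The same patch with a faint pixel-level checkerboard, a stand-in for a periodic artifact.
patterned = [[smooth[y][x] + (10 if (x + y) % 2 else -10) for x in range(n)] for y in range(n)]

print(high_freq_ratio(smooth), high_freq_ratio(patterned))
```

The smooth patch concentrates its energy at low frequencies, while the added checkerboard puts energy at the highest representable frequency and raises the ratio. Natural photographs also contain high-frequency detail, so this number is only meaningful relative to a baseline.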

5. Issues with Hands and Text

AI image generators often struggle with fine details, particularly hands and text. Hands might have extra fingers, unnatural shapes, or inconsistent orientations. Similarly, text in AI-generated images often contains misspellings or distorted letterforms. Carefully examining these elements can be a quick way to identify deepfakes.

6. Authenticate’s Diffusion Model Deepfake Filter

Authenticate, a provider of deepfake detection tools, has developed a diffusion model deepfake filter. This tool specializes in identifying images generated using diffusion models by analyzing patterns and inconsistencies characteristic of these models. It is intended to flag diffusion-generated images that are hard to spot by eye.

7. Authenticate’s Face GAN Deepfake Filter

Another innovation by Authenticate is its Face GAN deepfake filter. This tool focuses on detecting deepfakes created using Generative Adversarial Networks (GANs). It analyzes facial features, lighting, and other details to uncover signs of manipulation. This filter is particularly effective for identifying deepfake portraits.

8. Inconsistencies in Shadows

Lighting and shadows in AI-generated images can often appear unnatural. For example, shadows might not align with the light source, or multiple light sources might create conflicting shadows. Observing these inconsistencies can help detect deepfakes. This technique requires a keen eye and attention to detail.

9. Position of the Eyes

The position and alignment of eyes in portraits can reveal deepfakes. AI-generated faces often have asymmetrical or misaligned eyes, especially in group photos. Analyzing these discrepancies can be an effective way to identify manipulated images.
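One way to put numbers on eye alignment is sketched below, assuming the eye centers and nose tip have already been located by hand or by some landmark detector. The function name, the choice of landmarks, and the two measurements are assumptions for illustration, not an established test. Natural head tilt or rotation also shifts these values, so they are most useful for roughly frontal faces or for comparing several faces in the same photo.

```python
import math

def eye_alignment(left_eye, right_eye, nose_tip):
    """Return (tilt in degrees, asymmetry) from (x, y) pixel coordinates of three landmarks.

    tilt: angle of the line joining the eyes relative to horizontal.
    asymmetry: difference between the two eye-to-nose distances,
               divided by the distance between the eyes.
    """
    dx = right_eye[0] - left_eye[0]
    dy = right_eye[1] - left_eye[1]
    interocular = math.hypot(dx, dy)
    if interocular == 0:
        raise ValueError("eye landmarks coincide")
    tilt_deg = math.degrees(math.atan2(dy, dx))
    d_left = math.dist(left_eye, nose_tip)
    d_right = math.dist(right_eye, nose_tip)
    asymmetry = abs(d_left - d_right) / interocular
    return tilt_deg, asymmetry

# Hypothetical landmark coordinates for two faces.
aligned = eye_alignment((100, 100), (160, 100), (130, 140))
skewed = eye_alignment((100, 100), (160, 112), (130, 140))
print(aligned, skewed)
```

The first face has level eyes placed symmetrically around the nose. The second has one eye 12 pixels lower, which shows up as both a tilt and a clear asymmetry.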

10. Tools for Image Authentication

Dedicated image authentication tools can provide a more comprehensive analysis. Services like FotoForensics and Deepware Scanner are designed to identify signs of manipulation or synthetic generation. Because no single tool is definitive, their results are best cross-checked against the manual techniques described above.

Conclusion

As AI-generated images become more sophisticated, techniques for detecting deepfakes created by text-to-image services grow in importance. Methods like analyzing metadata, observing inconsistencies in perspective and shadows, and leveraging dedicated tools play a crucial role in identifying deepfakes. By staying informed and combining these strategies, individuals and organizations can better navigate the challenges posed by AI-generated imagery.